A typical problem in the life of a computational scientist: your calculations take longer than the wall-time limit on the supercomputer. If the job can be restarted, you can work around that by resubmitting it manually, but that quickly gets tedious.

My solution: use the “resend” BASH script 😉 (which you can download here)

As with most of my scripts, there is a “-h” option: if you don’t remember the syntax, it will remind you of the few available options.

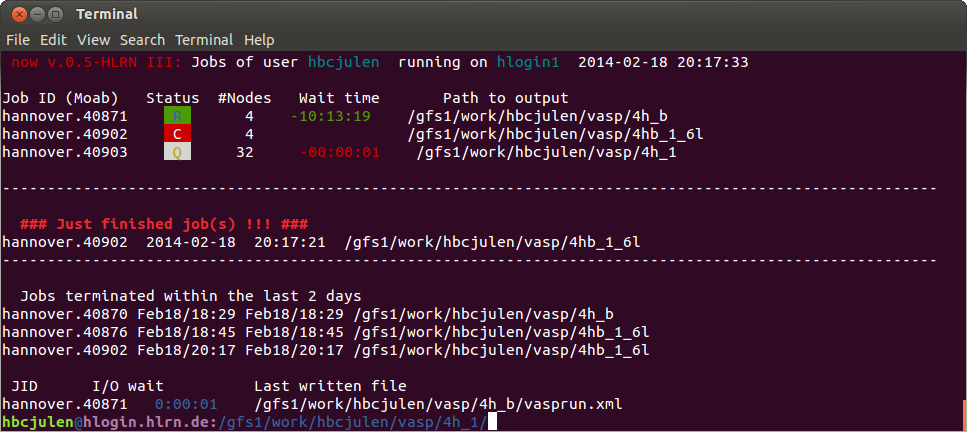

You need the job script you submit to the queue, and the input(s). The script keeps polling qstat for the current user’s jobs and searches the output for the path from which resend was launched. Whenever no job with that path is running or queued, it submits another one. If there is such a job, it waits one minute and checks again.
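To make the logic above concrete, here is a minimal sketch of such a polling loop. It is not the actual resend code: the function names (`list_jobs`, `submit_job`, `job_present`, `resend_loop`) are hypothetical, and it assumes a PBS-like scheduler where `qstat -u "$USER"` lists your jobs and `qsub` submits a script. Whether the launch path really shows up in the qstat output depends on your scheduler and its configuration, so `list_jobs` is the piece you would most likely need to adapt.

```shell
#!/usr/bin/env bash
# Sketch only: hypothetical names, not the real resend implementation.

# Assumption: this prints one line per job of the current user, and each
# line (somehow) contains the directory the job was submitted from.
list_jobs() { qstat -u "$USER"; }

# Assumption: the scheduler accepts a job script through qsub.
submit_job() { qsub "$1"; }

# Succeeds if the given directory appears anywhere in the job listing.
job_present() { list_jobs | grep -qF -- "$1"; }

# resend_loop TIMES SCRIPT [INTERVAL_SECONDS]
# Submits SCRIPT up to TIMES times, one job at a time, polling the queue
# every INTERVAL_SECONDS (default: 60). A file named STOP in the launch
# directory ends the loop early.
resend_loop() {
    local times=$1 script=$2 interval=${3:-60}
    local dir=$PWD sent=0
    while (( sent < times )); do
        if [[ -e "$dir/STOP" ]]; then
            break                      # user asked us to stop
        fi
        if ! job_present "$dir"; then
            submit_job "$script"       # nothing running or queued from here
            sent=$((sent + 1))
        fi
        sleep "$interval"              # wait before checking the queue again
    done
}
```

Calling `resend_loop 5 /path/to/script.cmd` would then mimic the behavior described above. The sketch also sleeps right after a submission, so that the new job has time to appear in the queue before the next check; the real script may handle this differently.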

The resend script is as easy to use as:

user> nohup resend -n 5 -f /path/to/script.cmd &

where the “-n” option specifies the number of times the job will be resent (default: 3) and “-f” the path to the batch job script (default: ./job.cmd). Passing “-f” explicitly is useful because it lets you identify the process later (e.g. with “ps”) if necessary.
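For readers curious how such options are usually handled in BASH, the following is a small sketch based on the `getopts` builtin. The defaults (3 and ./job.cmd) come from the description above; the function name `parse_args`, the variable names and the usage text are assumptions of mine and may not match what the real script does or prints.

```shell
#!/usr/bin/env bash
# Sketch only: hypothetical option parsing, not the real resend code.

# parse_args [-n TIMES] [-f SCRIPT] [-h]
# Sets the global variables `times` and `script`.
# Returns 0 on success, 1 on a bad option, 2 after printing the help text.
parse_args() {
    times=3             # default number of submissions
    script=./job.cmd    # default batch job script
    local opt OPTIND=1  # reset getopts state for every call
    while getopts ":n:f:h" opt; do
        case $opt in
            n) times=$OPTARG ;;
            f) script=$OPTARG ;;
            h) echo "usage: resend [-n times] [-f script] [-h]"
               return 2 ;;
            *) echo "unknown or incomplete option: -$OPTARG" >&2
               return 1 ;;
        esac
    done
}
```

With this in place, `parse_args -n 5 -f /path/to/script.cmd` leaves `times` set to 5 and `script` set to `/path/to/script.cmd`, while calling it without arguments keeps the defaults.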

To stop the script, run the following from the same directory:

>touch STOP

This breaks the internal loop and exits the script. Alternatively, look up its PID and kill it.

Another trick: if you have already started resend but then realize you would like to run the job a few more times, you can start it again, and the job will be submitted as many times as the sum of what both resend instances request.